Media negotiation

This section explains how a participant negotiates its audio and video streams when joining a conference room. The process has two parts: the negotiation of the audio stream and the negotiations of the video streams.

When a user joins a conference room, several media connections are established: one for sending and receiving audio, one for sending video, and one for each participant in the conference who publishes video. In WebRTC terminology, each media connection is called a PeerConnection. WebRTC uses SDP to negotiate the parameters of each media connection: one side sends an offer listing its supported media parameters, such as codecs, encryption parameters and ICE candidates (which help discover the IP addresses that will be used to send and receive media), and the other side replies with an answer. When using the JavaScript SDK in Google Chrome, the media negotiation can be easily debugged with chrome://webrtc-internals. All negotiation messages travel over the WebSocket connection to the Quobis WAC, and the internal WebSocket gateway automatically routes them to the right video SFU.
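
To make the content of an SDP offer more concrete, the sketch below summarises which codecs and how many ICE candidates each media section of an offer declares. It only reads the standard SDP `m=`, `a=rtpmap` and `a=candidate` lines; the function name `summarizeSdp` and the `MediaSection` shape are hypothetical helpers for illustration and are not part of the Quobis SDK.

```typescript
// Hypothetical helper, not a Quobis SDK API: summarise an SDP offer per media section.
interface MediaSection {
  kind: string;       // media type from the m= line, e.g. "audio" or "video"
  codecs: string[];   // encoding names from a=rtpmap lines, e.g. "opus", "VP8"
  candidates: number; // number of a=candidate lines (ICE candidates)
}

function summarizeSdp(sdp: string): MediaSection[] {
  const sections: MediaSection[] = [];
  for (const rawLine of sdp.split(/\r?\n/)) {
    const line = rawLine.trim();
    if (line.startsWith("m=")) {
      // m=<media> <port> <proto> <fmt list>: a new media section starts here
      sections.push({ kind: line.slice(2).split(" ")[0] ?? "", codecs: [], candidates: 0 });
      continue;
    }
    const current = sections[sections.length - 1];
    if (current === undefined) continue; // session-level line before any m= line
    if (line.startsWith("a=rtpmap:")) {
      // a=rtpmap:<payload type> <encoding name>/<clock rate>[/<channels>]
      const encoding = line.split(" ")[1];
      if (encoding !== undefined) current.codecs.push(encoding.split("/")[0] ?? encoding);
    } else if (line.startsWith("a=candidate:")) {
      current.candidates += 1;
    }
  }
  return sections;
}

const example = "m=audio 9 UDP/TLS/RTP/SAVPF 111\r\na=rtpmap:111 opus/48000/2";
console.log(summarizeSdp(example)[0]?.codecs); // logs [ 'opus' ]
```

The same information is visible per PeerConnection in chrome://webrtc-internals; a helper like this is only a convenience for inspecting SDP captured from logs.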

Audio negotiation

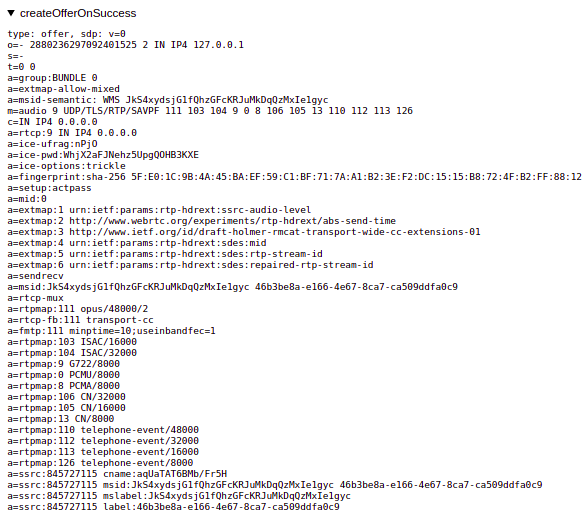

As mentioned above, the audio negotiation happens only once, at the beginning of the call, and the audio PeerConnection remains open until the user leaves the conference. The PeerConnection for audio is started by the SDK (both web and mobile), which generates a WebRTC-compliant SDP offer. This offer is sent to the SFU over the WebSocket connection.

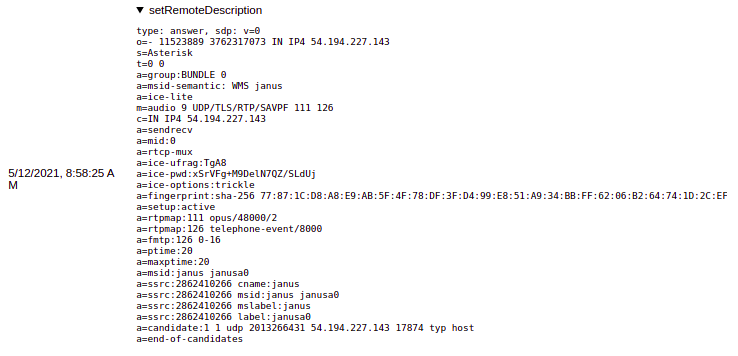

The audiomixer is the element that decides which audio codec is used. The SFU forwards the SDP offer to the audiomixer, which processes the offer and generates an SDP answer. By default, the answer lists the Opus codec as the first option, but this can be changed in the audiomixer configuration. Once the SDP offer has been answered, the PeerConnection tries to establish the media connection.
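
The codec choice can be pictured as reordering the codecs of the offer according to a preference list. The sketch below assumes a hypothetical `preferred` list standing in for the audiomixer configuration (whose real keys are not documented here) and relies on the SDP offer/answer rule that an answer may only contain codecs present in the offer. It illustrates the idea; it is not the audiomixer's implementation.

```typescript
// Hypothetical sketch of the audiomixer codec choice; `preferred` stands in for
// the real audiomixer configuration, which is not described in this section.
const DEFAULT_PREFERRED_AUDIO = ["opus"]; // Opus first by default, as described above

function orderAnswerCodecs(offered: string[], preferred: string[] = DEFAULT_PREFERRED_AUDIO): string[] {
  const prefs = preferred.map((p) => p.toLowerCase());
  // Preferred codecs first, in preference order, but only if the offer contains them:
  // an SDP answer cannot introduce codecs that were not offered.
  const first: string[] = [];
  for (const p of prefs) {
    for (const c of offered) {
      if (c.toLowerCase() === p) first.push(c);
    }
  }
  // Remaining offered codecs keep their original order.
  const rest = offered.filter((c) => !prefs.includes(c.toLowerCase()));
  return [...first, ...rest];
}

console.log(orderAnswerCodecs(["PCMU", "opus", "G722"])); // logs [ 'opus', 'PCMU', 'G722' ]
```

If the configured preference is not in the offer, the sketch simply falls back to the offer's own order rather than failing.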

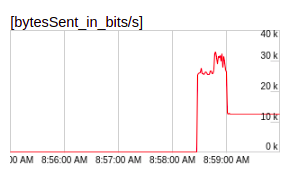

Establishing the media connection fails only if there is no connectivity, which would normally happen only when the user is behind a very restrictive firewall or HTTP proxy and the TURN server is not properly configured. Note that when the audio is muted, the PeerConnection keeps sending data, but at a very low rate. Video behaves differently: when the video is muted, its PeerConnection is closed, and a new one is created and negotiated when the video is unmuted.
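
The difference between muting audio and muting video can be summarised as a small state model. The sketch below is hypothetical (the `LocalMediaModel` class does not exist in the SDK) and assumes the user publishes video when joining; it only counts how many SDP negotiations each stream goes through.

```typescript
// Hypothetical model of the mute behaviour described above; no SDK calls involved.
type Kind = "audio" | "video";

class LocalMediaModel {
  // Both PeerConnections are negotiated once on join (assumes the user publishes video).
  negotiations: Record<Kind, number> = { audio: 1, video: 1 };
  open: Record<Kind, boolean> = { audio: true, video: true };
  audioRate: "normal" | "low" = "normal";

  mute(kind: Kind): void {
    if (kind === "audio") {
      this.audioRate = "low"; // PeerConnection stays open, only the data rate drops
    } else {
      this.open.video = false; // the video PeerConnection is closed
    }
  }

  unmute(kind: Kind): void {
    if (kind === "audio") {
      this.audioRate = "normal"; // same PeerConnection, no renegotiation
    } else if (!this.open.video) {
      this.open.video = true;       // a new video PeerConnection is created...
      this.negotiations.video += 1; // ...which requires a new SDP offer/answer
    }
  }
}
```

After one mute/unmute cycle of each stream, the model reports one audio negotiation and two video negotiations.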

Video negotiation

Each conference involves several video negotiations:

One negotiation for the video being published by the user.

One negotiation for each of the participants in the conference who publish video.
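
Putting this together with the audio PeerConnection, the number of PeerConnections a participant holds can be counted directly. The sketch below follows the breakdown given in this section; the `Participant` shape and `countPeerConnections` function are hypothetical names used only for illustration.

```typescript
// Hypothetical sketch: count the PeerConnections held by one participant.
interface Participant {
  id: string;
  publishesVideo: boolean;
}

function countPeerConnections(self: Participant, room: Participant[]): number {
  const audio = 1; // one send/receive audio PeerConnection for the whole call
  const ownVideo = self.publishesVideo ? 1 : 0; // one for the published video, if any
  // One per other participant who publishes video (self is excluded by id).
  const remoteVideo = room.filter((p) => p.id !== self.id && p.publishesVideo).length;
  return audio + ownVideo + remoteVideo;
}

const me: Participant = { id: "a", publishesVideo: true };
const room: Participant[] = [
  me,
  { id: "b", publishesVideo: true },
  { id: "c", publishesVideo: false },
  { id: "d", publishesVideo: true },
];
console.log(countPeerConnections(me, room)); // logs 4
```

Here the user holds 1 audio + 1 published video + 2 subscribed videos (participants b and d) = 4 PeerConnections, of which 3 are video negotiations.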

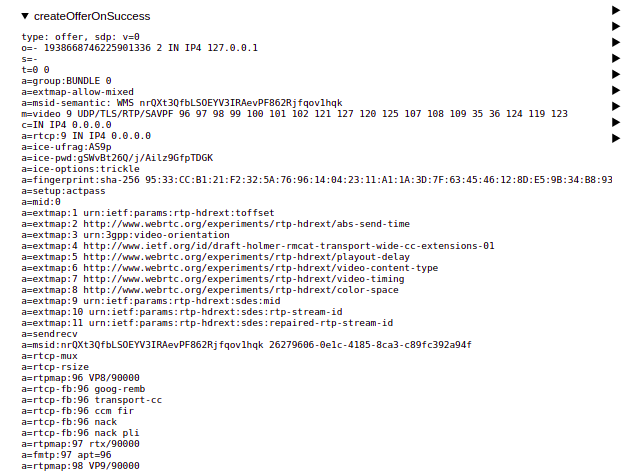

The video negotiation happens between the SDK and the SFU. The negotiation differs depending on whether the SDK is publishing video from the local camera or subscribing to the video of other participants. When publishing video, the SDK sends an SDP offer that includes all the video codecs supported by the SDK (they are not included in the screenshot below).

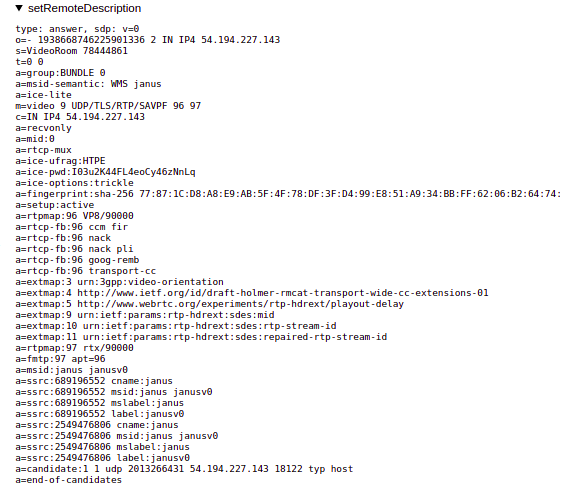

The SFU replies with an SDP answer that defines the video codec to be used, so the codec for the conference must be configured in the SFU. Currently, VP8 and H264 are supported. The codec included in the SDP answer is the one used for the video.
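
The SFU side of a publish negotiation can be sketched as keeping only the payload types of the configured codec from the video section of the offer. The function `answerPayloadTypes` and the error it raises are hypothetical; a real SFU would also match format parameters (for example H264 profiles in `a=fmtp` lines), which this sketch ignores.

```typescript
// Hypothetical sketch of the SFU's codec selection for a published video stream.
type VideoCodec = "VP8" | "H264"; // the codecs listed as supported in this section

function answerPayloadTypes(offerSdp: string, configured: VideoCodec): number[] {
  const matches: number[] = [];
  let inVideo = false;
  for (const raw of offerSdp.split(/\r?\n/)) {
    const line = raw.trim();
    if (line.startsWith("m=")) inVideo = line.startsWith("m=video"); // track current section
    // a=rtpmap:<payload type> <encoding name>/<clock rate>
    const m = /^a=rtpmap:(\d+) ([^\/]+)\//.exec(line);
    if (inVideo && m !== null && (m[2] ?? "").toUpperCase() === configured) {
      matches.push(Number(m[1]));
    }
  }
  if (matches.length === 0) {
    // The configured codec was not offered, so no common video codec exists.
    throw new Error(`offer does not contain ${configured}`);
  }
  return matches;
}
```

For an offer whose video section maps payload type 96 to VP8 and 102 to H264, an SFU configured for VP8 would answer with payload type 96 only.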

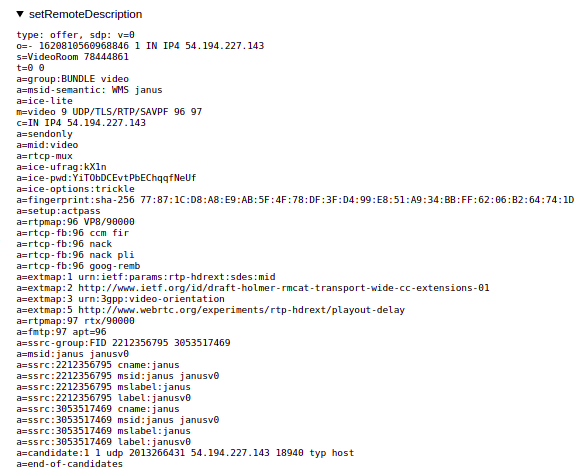

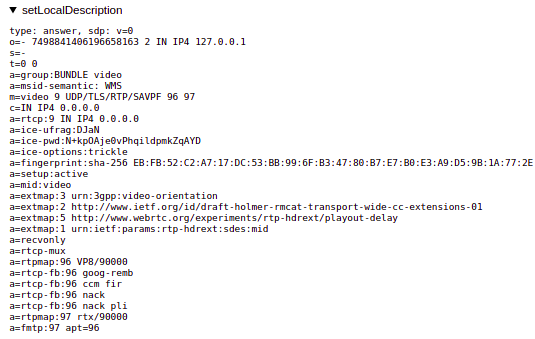

When subscribing to the video of other participants, the SFU sends an offer (including only the video codec configured in the SFU) and the SDK answers it. There is one such PeerConnection negotiation per participant publishing video.

Since the offer includes only one video codec (the one configured in the SFU), the answer must include only that codec, which is then used to receive the video stream.
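
On the SDK side, answering a subscription offer reduces to echoing back the single offered codec, provided the SDK can decode it. The sketch below is a hypothetical check (`answerSubscriptionCodec` is not an SDK function); the errors it raises for multi-codec offers or unsupported codecs are assumptions made for illustration.

```typescript
// Hypothetical sketch of the subscriber side of a video negotiation.
function answerSubscriptionCodec(offered: string[], supportedBySdk: string[]): string {
  if (offered.length !== 1) {
    // Per the text, the SFU offer carries exactly one codec: the room's codec.
    throw new Error(`expected one video codec in the SFU offer, got ${offered.length}`);
  }
  const codec = offered[0] ?? "";
  const supported = supportedBySdk.some((c) => c.toLowerCase() === codec.toLowerCase());
  if (!supported) {
    throw new Error(`SDK cannot receive ${codec}`);
  }
  return codec; // the answer contains only this codec
}
```

Because every subscription offer carries the same configured codec, every remote video in the room is received with that codec.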

Note

Only one video codec can be used in a given conference room: every participant uses the same video codec.